Tag: TensorRT

How Quantization Aware Training Enables Low-Precision Accuracy Recovery

October 3, 2025

Deploy High-Performance AI Models in Windows Applications on NVIDIA RTX AI PCs

September 28, 2025

NVIDIA AI Inference Backends

September 28, 2025

BOXER-8741AI: NVIDIA Jetson T5000

September 18, 2025

Introducing NVIDIA Jetson Thor, the Ultimate Platform for Physical AI | NVIDIA Technical Blog

September 5, 2025

Ultralytics YOLO11

October 8, 2024

Access to NVIDIA NIM Now Available Free to Developer Program Members

July 30, 2024

Generate Traffic Insights Using YOLOv8 and NVIDIA JetPack 6.0 | NVIDIA Technical Blog

June 21, 2024

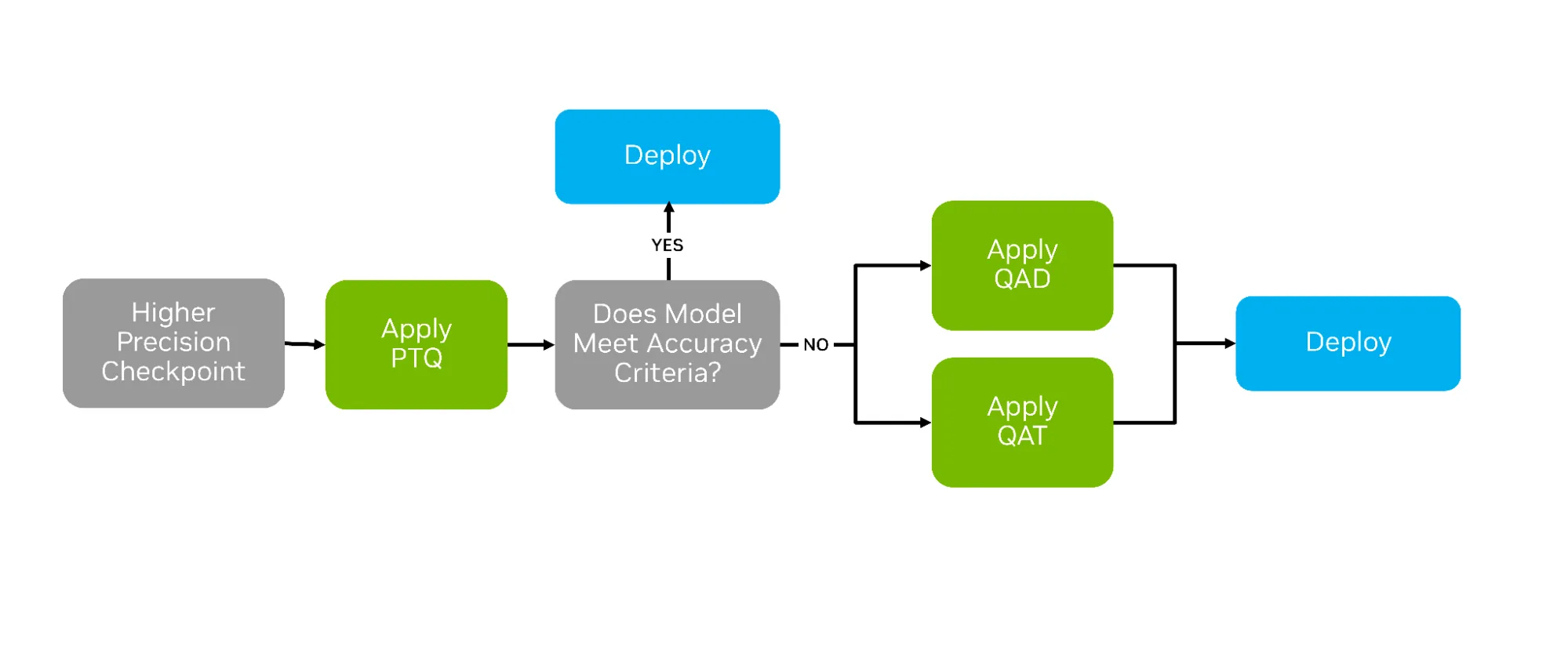

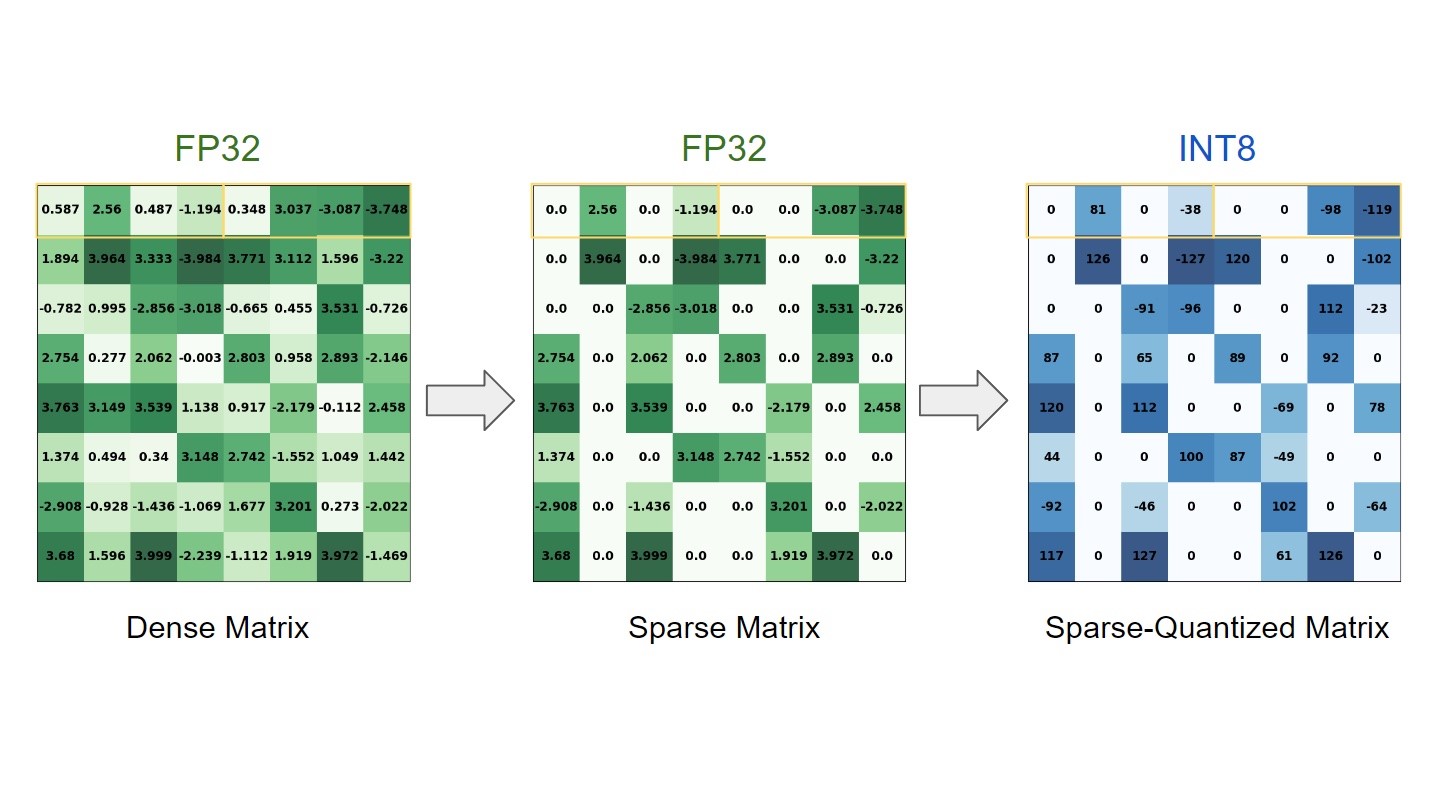

Sparsity in INT8: Training Workflow and Best Practices for NVIDIA TensorRT Acceleration

June 3, 2023

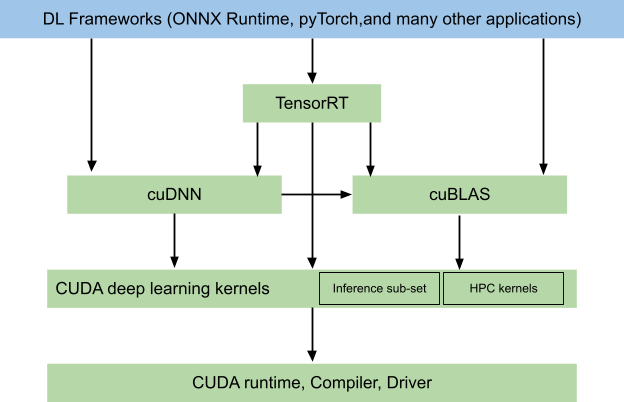

End-to-End AI for NVIDIA-Based PCs: CUDA and TensorRT Execution Providers in ONNX Runtime

February 14, 2023

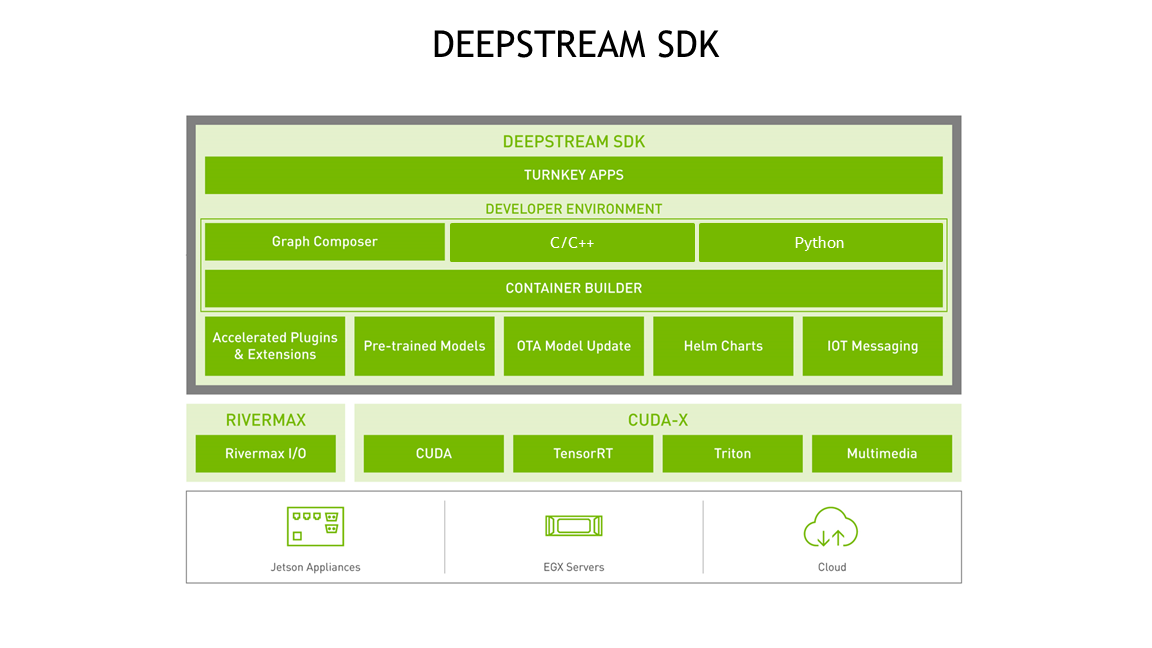

Get Started With the NVIDIA DeepStream SDK

February 6, 2023

YOLOv7: YOLO with Transformers and Instance Segmentation, with TensorRT acceleration!

June 29, 2022

Real-Time Object Detection with DeepStream on Nvidia Jetson AGX Orin

June 14, 2022

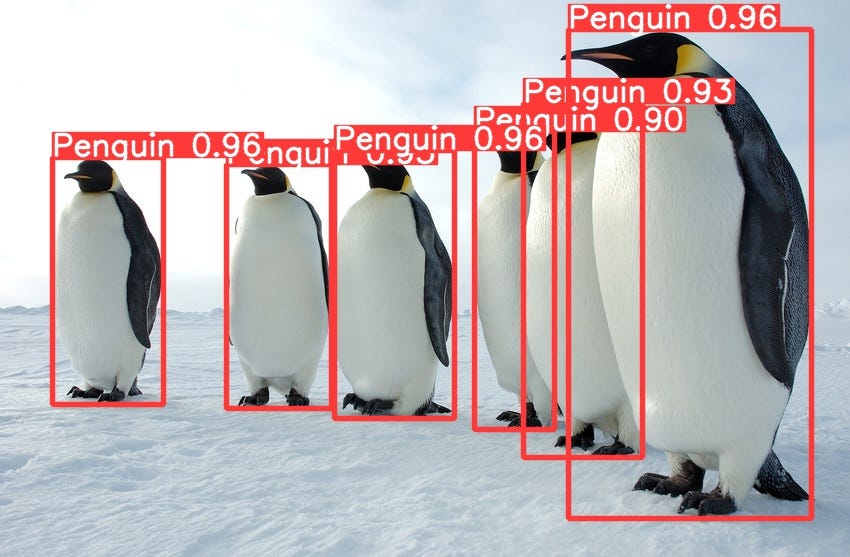

The practical guide for Object Detection with YOLOv5 algorithm

April 2, 2022

NVIDIA Announces TensorRT 8.2 and Integrations with PyTorch and TensorFlow

December 6, 2021

NVIDIA Announces TensorRT 8 Slashing BERT-Large Inference Down to 1 Millisecond

July 30, 2021

Using MATLAB and TensorRT on NVIDIA GPUs | NVIDIA Developer Blog

August 25, 2019

NVIDIA open sources parsers and plugins in TensorRT – NVIDIA Developer News Center

June 23, 2019