NVIDIA Announces TensorRT 8 Slashing BERT-Large Inference Down to 1 Millisecond

“NVIDIA announced TensorRT 8.0, which brings BERT-Large inference latency down to 1.2 ms with new optimizations. This version also delivers 2x the accuracy for INT8 precision with Quantization Aware Training, and significantly higher performance through support for Sparsity, which was introduced in Ampere GPUs.

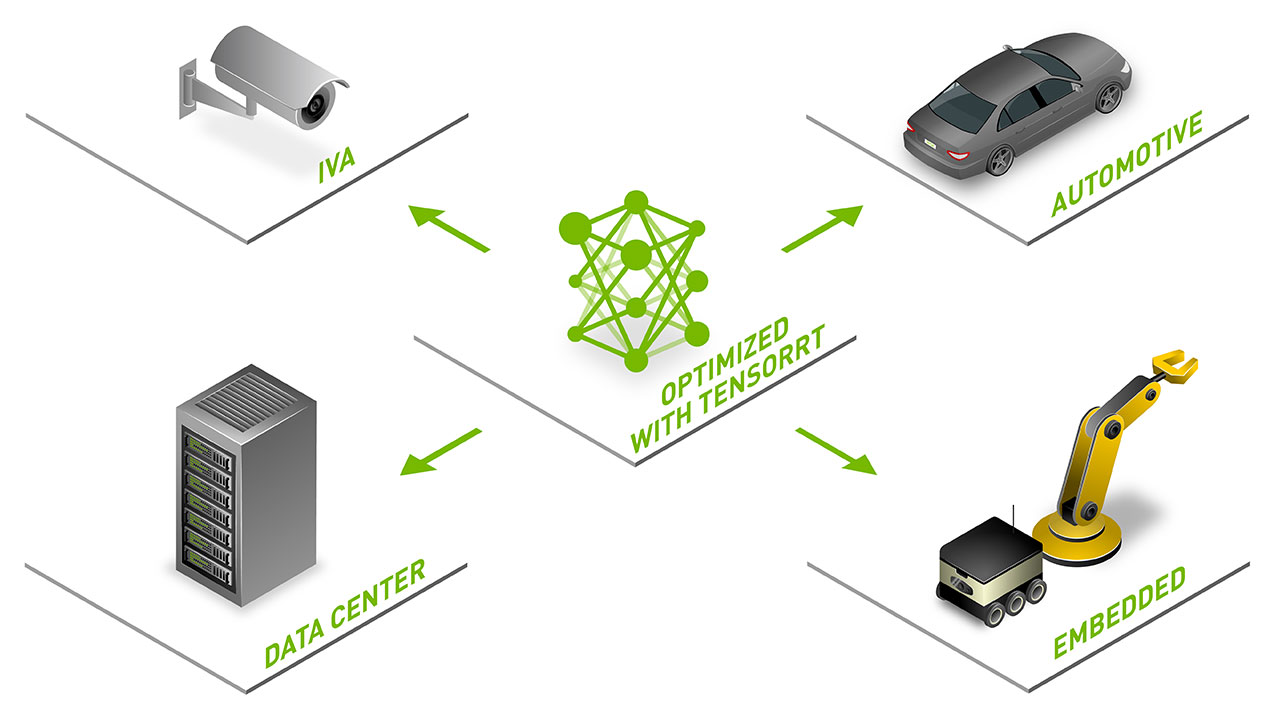

TensorRT is an SDK for high-performance deep learning inference that includes an inference optimizer and runtime that delivers low latency and high throughput. TensorRT is used across industries such as Healthcare, Automotive, Manufacturing, Internet/Telecom services, Financial Services, and Energy, and has been downloaded nearly 2.5 million times…”

July 30, 2021
