Tag: LLM

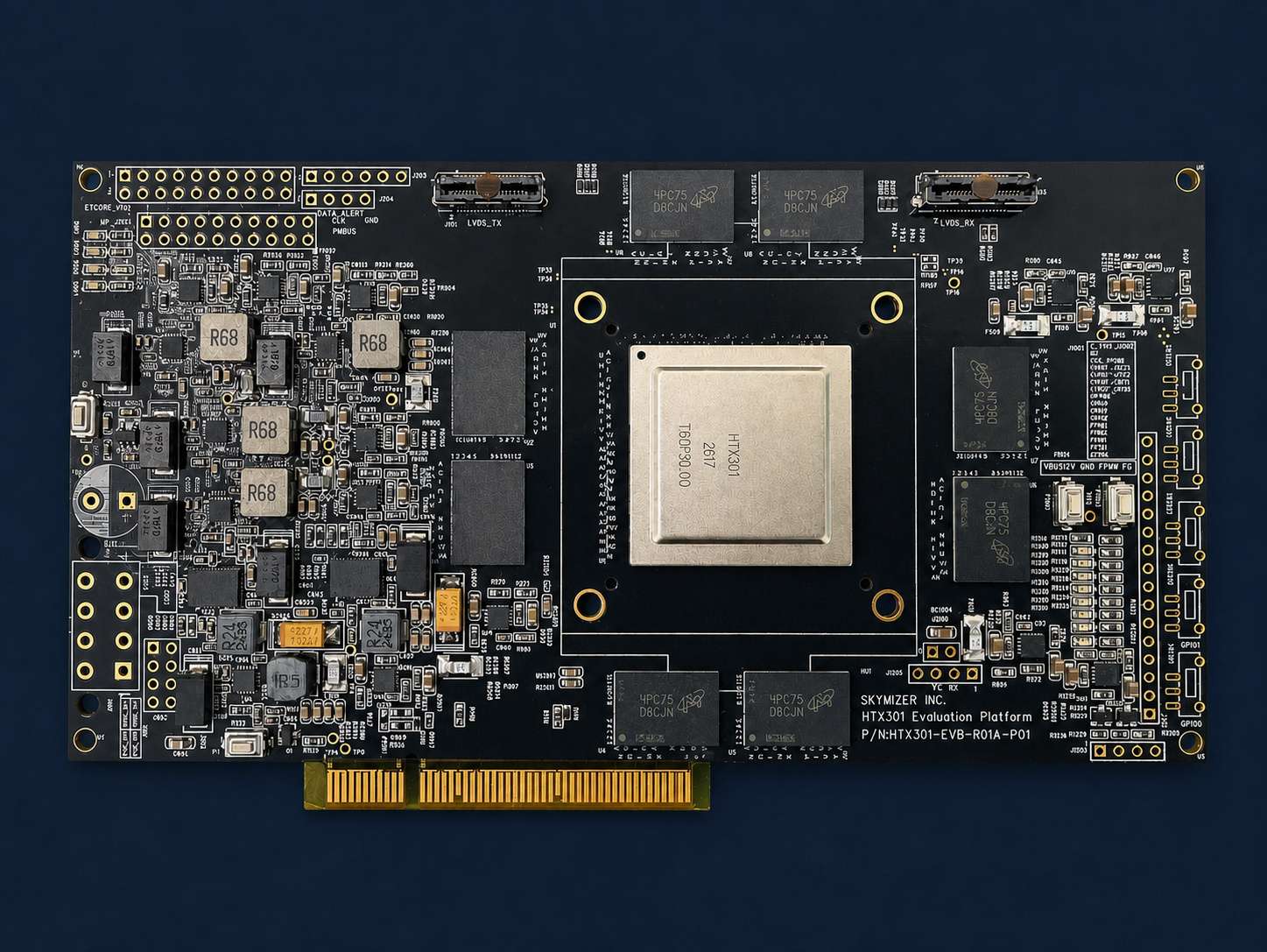

Skymizer Announces HTX301 — Reinventing On-Prem AI Inference

May 13, 2026

Introducing NVIDIA Nemotron 3 Nano Omni: Long-Context Multimodal Intelligence for Documents, Audio and Video Agents

May 4, 2026

NVIDIA Nemotron 3 Nano Omni Powers Multimodal Agent Reasoning in a Single Efficient Open Model

May 2, 2026

Gemini Robotics-ER 1.5

October 9, 2025

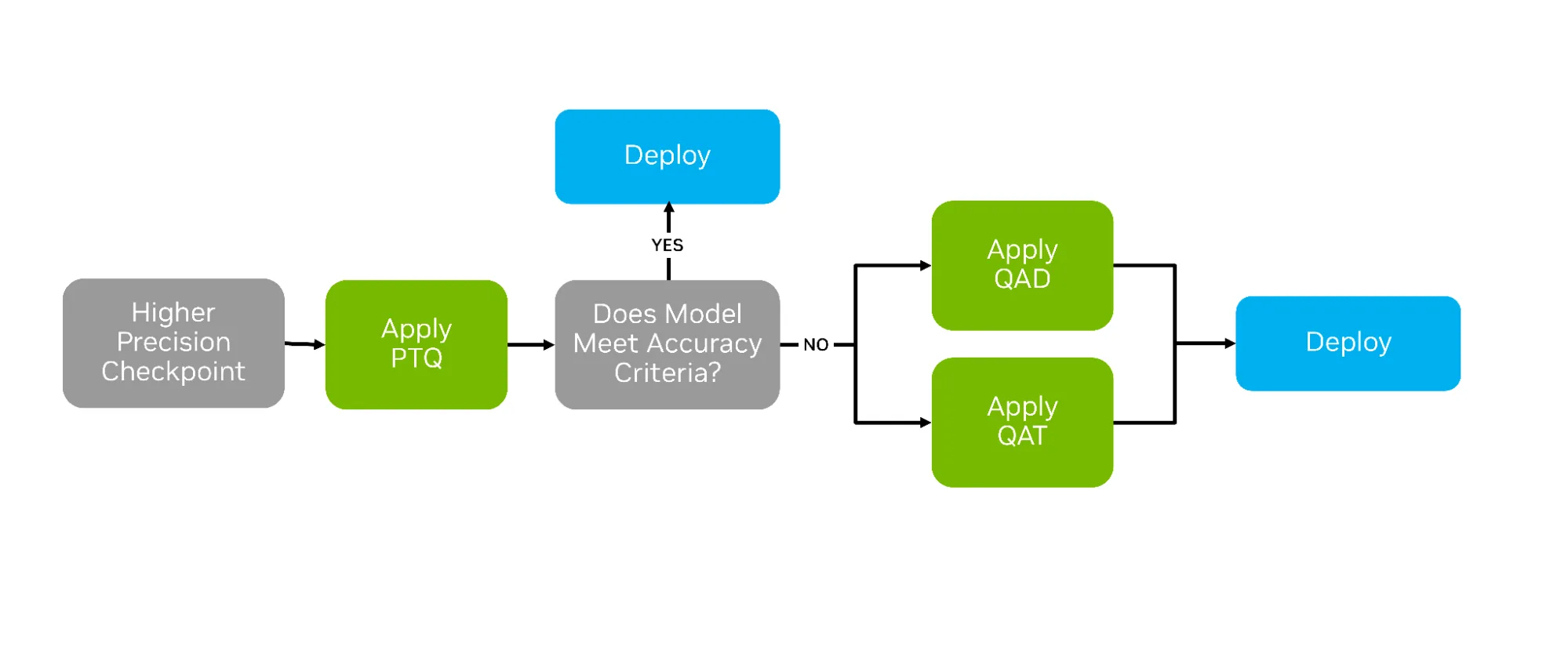

How Quantization Aware Training Enables Low-Precision Accuracy Recovery

October 3, 2025

How to Integrate Computer Vision Pipelines with Generative AI and Reasoning

September 27, 2025

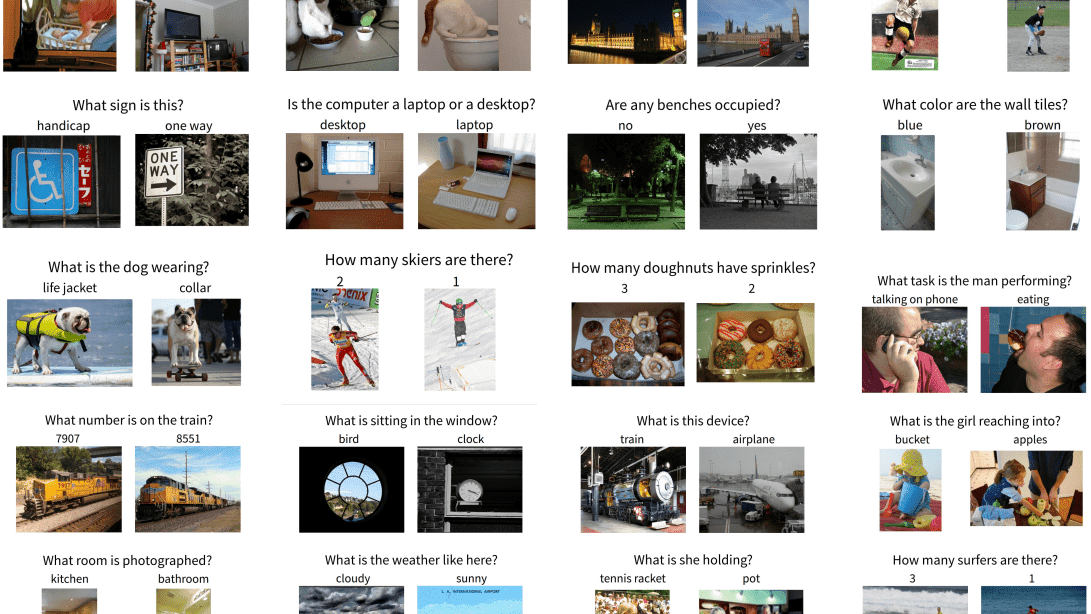

The Ultimate Guide To VLM Evaluation Metrics, Datasets, And Benchmarks

September 25, 2025

MiniCPM-V 4.5: A GPT-4o Level MLLM for Single Image, Multi Image and High-FPS Video Understanding on Your Phone

September 24, 2025

Reasoning Through Molecular Synthetic Pathways with Generative AI

September 24, 2025

How Small Language Models Are Key to Scalable Agentic AI

September 16, 2025

LLMs-from-scratch: Implement a ChatGPT-like LLM in PyTorch from scratch, step by step

September 14, 2025

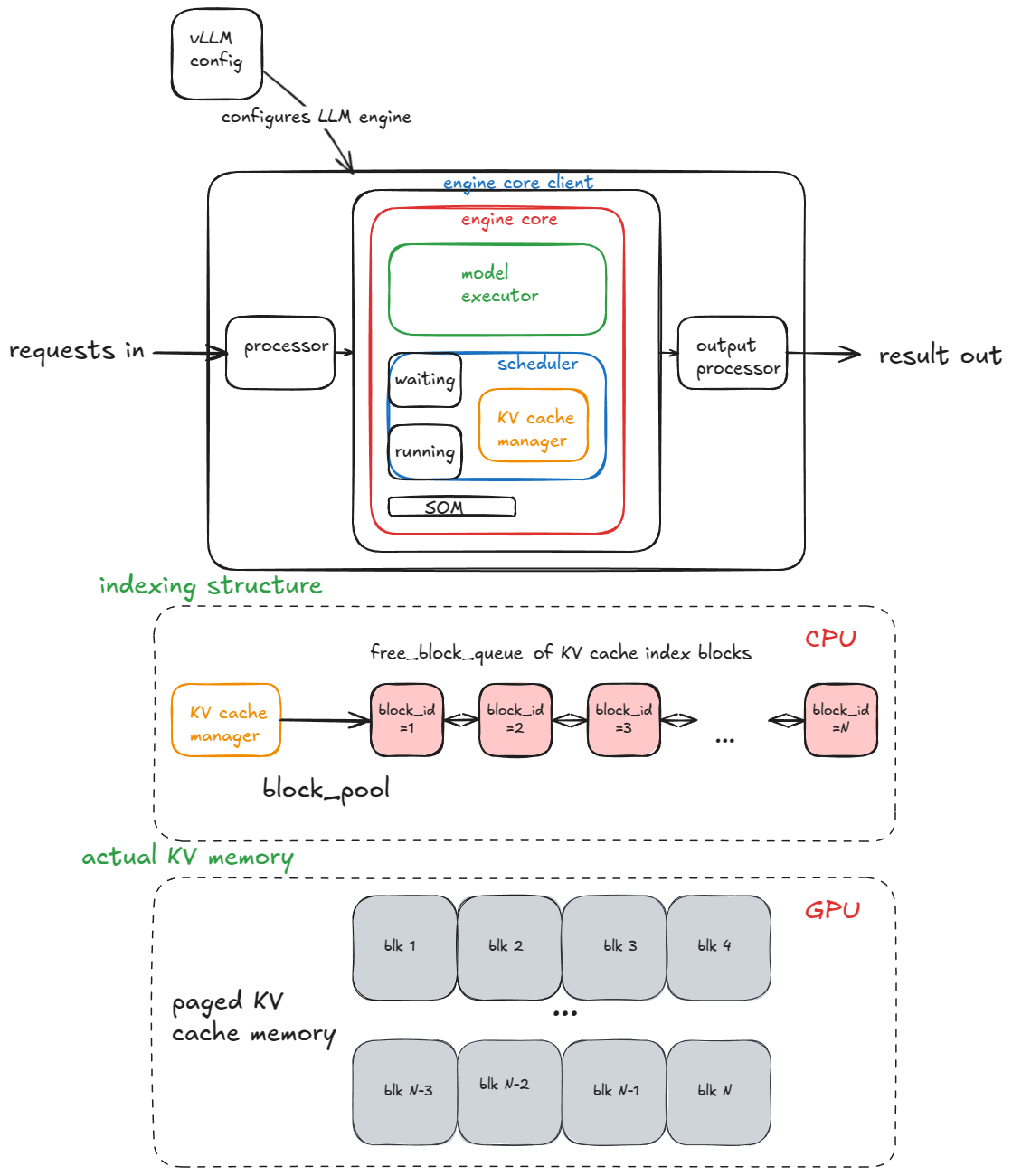

Inside vLLM: Anatomy of a High-Throughput LLM Inference System

September 9, 2025

SmolVLA: Efficient Vision Language Action Model – LeRobot

September 9, 2025

Cut Model Deployment Costs While Keeping Performance With GPU Memory Swap

September 7, 2025

The Story of BIX, Built with NVIDIA AI

January 24, 2025

Build VLM-Powered Visual AI Agents Using NVIDIA NIM and NVIDIA VIA Microservices

July 31, 2024

Access to NVIDIA NIM Now Available Free to Developer Program Members

July 30, 2024

Advancing Security for Large Language Models with NVIDIA GPUs and Edgeless Systems

July 10, 2024

Addressing Hallucinations in Speech Synthesis LLMs with the NVIDIA NeMo T5-TTS Model

July 9, 2024

Mastering LLM Techniques: Inference Optimization | NVIDIA Technical Blog

July 2, 2024

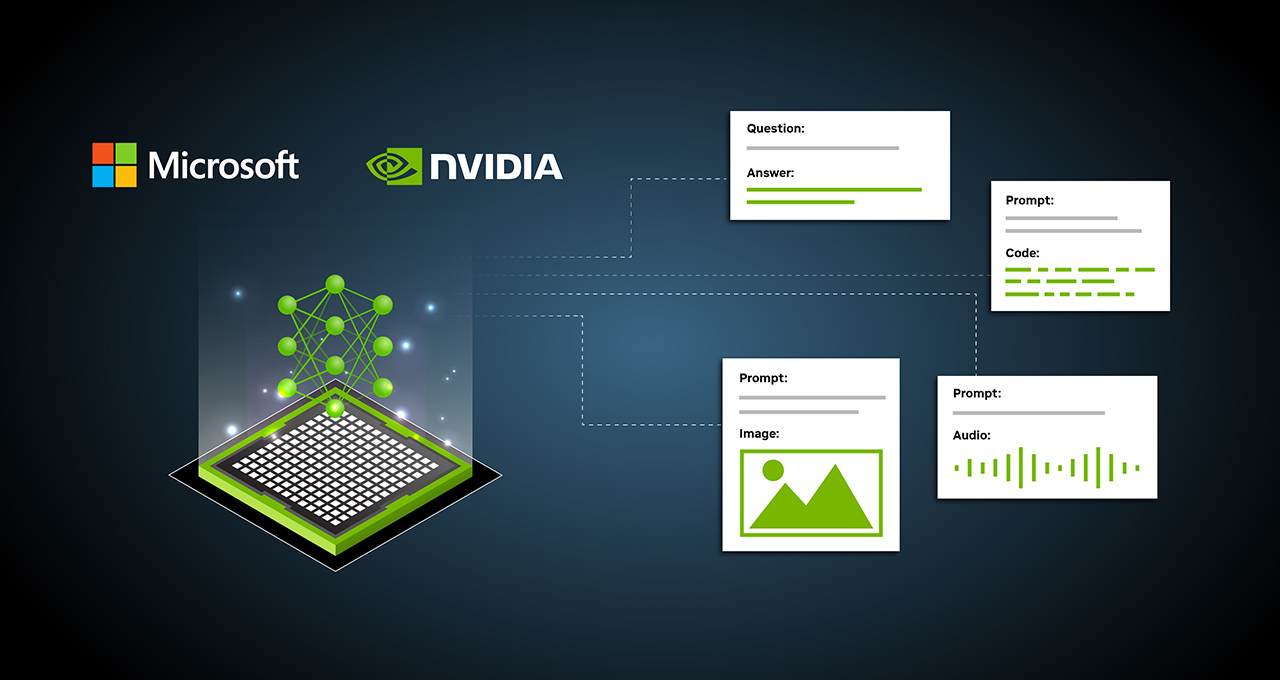

Microsoft drops Florence-2, a unified model to handle a variety of vision tasks

June 23, 2024

NVIDIA Releases Open Synthetic Data Generation Pipeline for Training Large Language Models

June 18, 2024

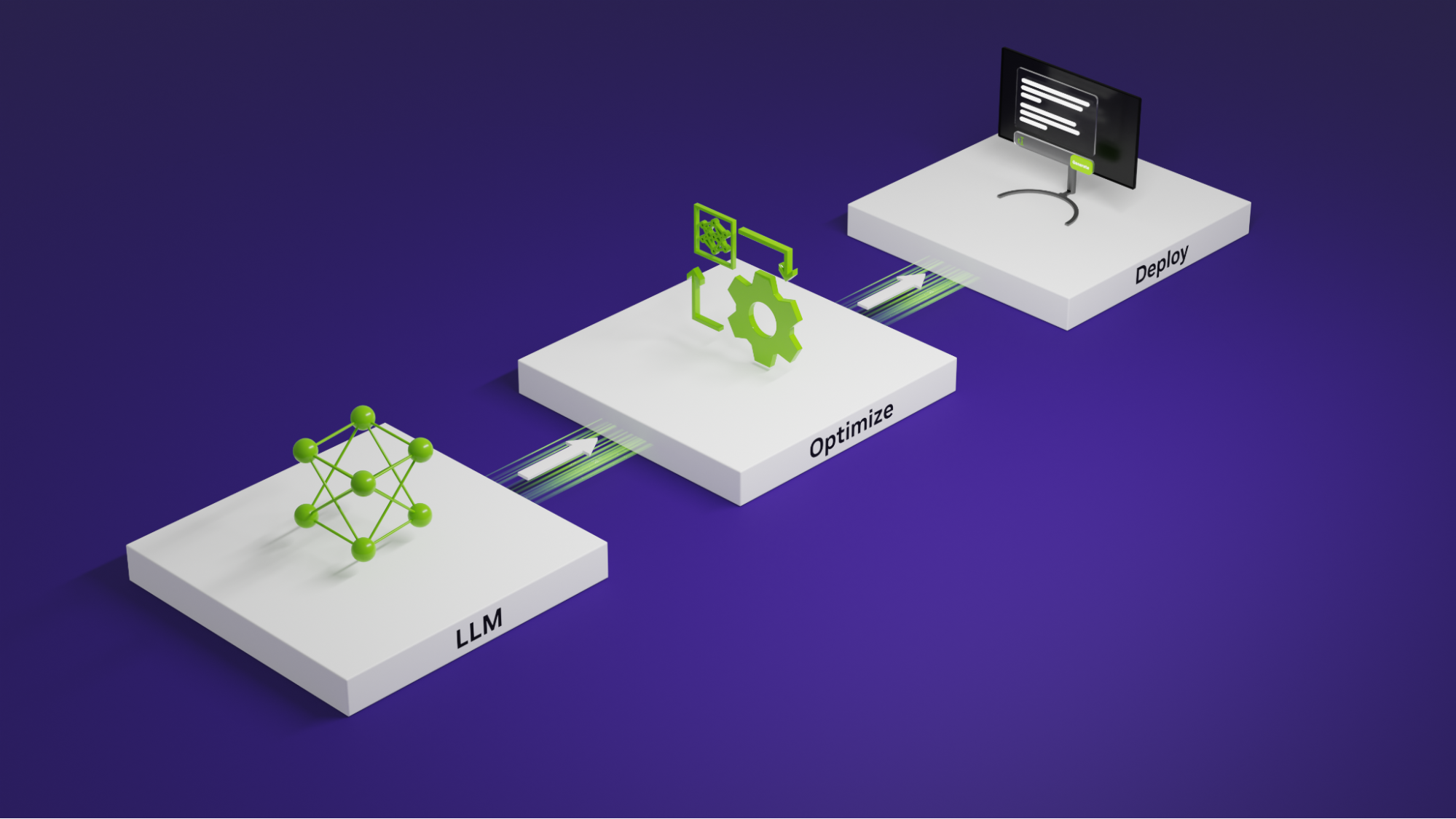

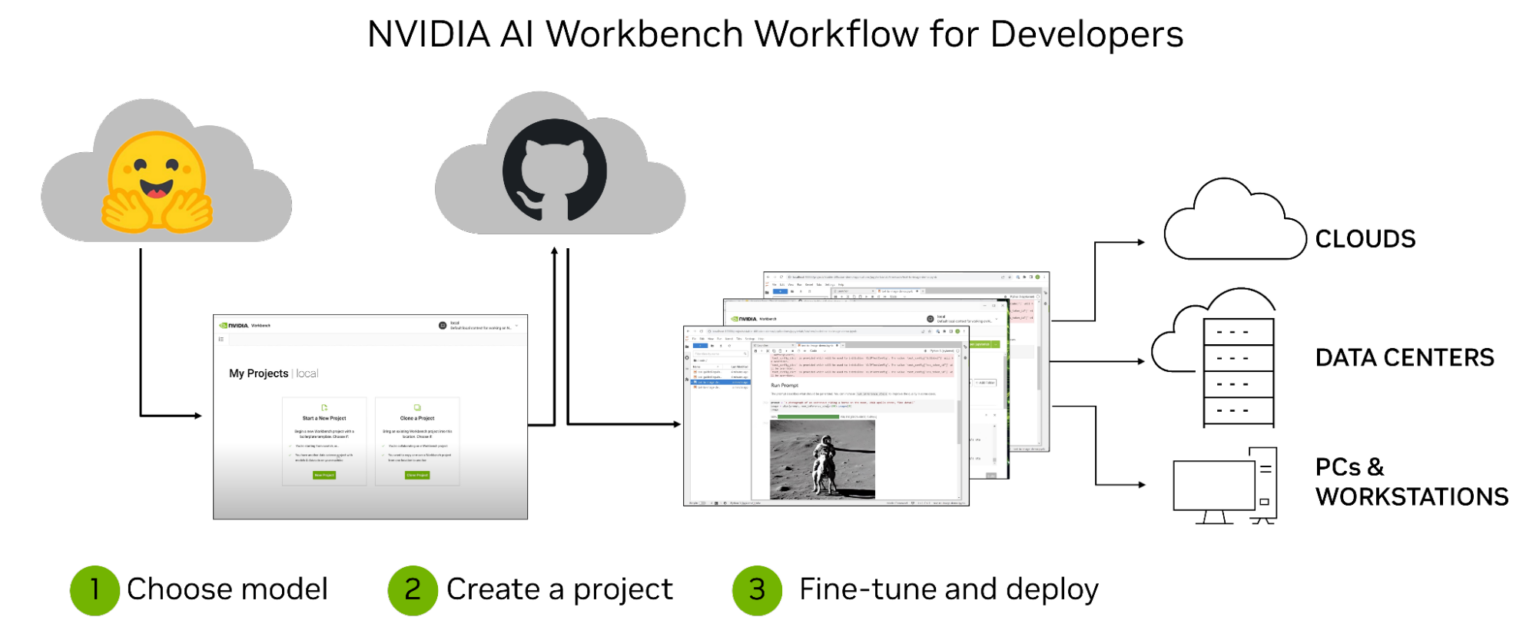

Develop and Deploy Scalable Generative AI Models Seamlessly with NVIDIA AI Workbench

August 24, 2023

LLM Powered Autonomous Agents

July 2, 2023

Driving Innovation for Windows PCs in Generative AI Era

June 5, 2023

LangChain: framework for developing applications powered by language models

June 2, 2023