The Ultimate Guide To VLM Evaluation Metrics, Datasets, And Benchmarks

“Vision-Language Models (VLMs) are powerful, but figuring out how well they actually work is a real challenge. No single test covers everything they can do. Instead, we need to pair the right datasets with the right VLM evaluation metrics.

…

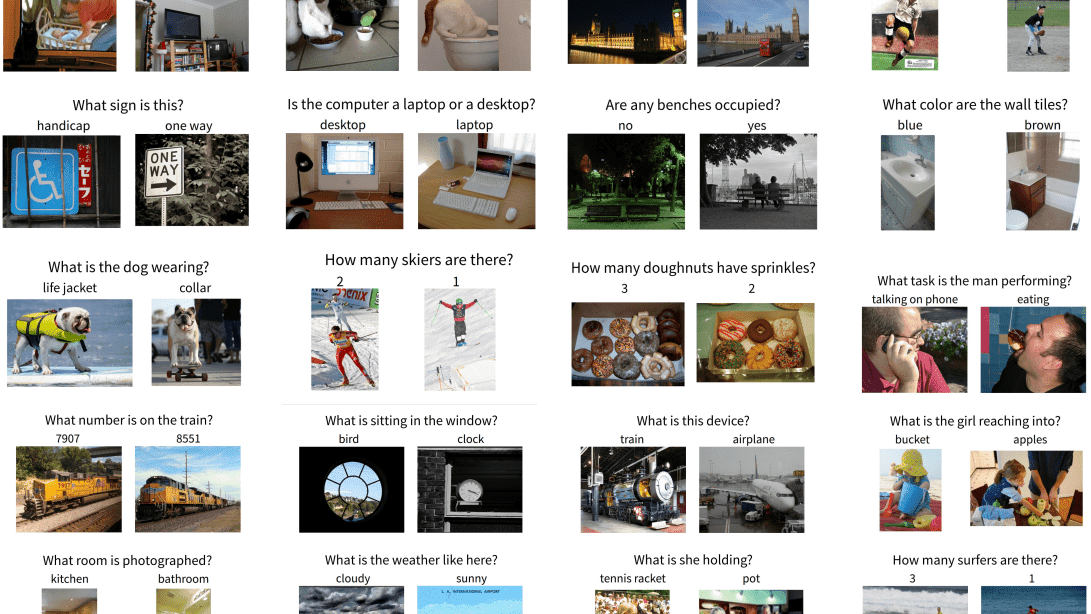

Evaluating a VLM is not as simple as evaluating a vision or object detection model. It requires task-specific datasets and matching evaluation metrics. The reason is simple: a model that excels at the logical reasoning needed for visual question answering (VQA) might struggle with the semantic richness required for high-quality image captioning. Similarly, the skills needed to read an invoice are different from those needed to pinpoint an object in a cluttered scene.

We will go through the various tasks, their respective datasets, and the essential VLM evaluation metrics. We then continue with a Python script that calculates the BLEU score for the smolVLM-instruct model…”

Source: https://learnopencv.com/vlm-evaluation-metrics/
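
The excerpt mentions computing a BLEU score for generated captions. As a rough illustration of what that metric measures, here is a minimal sketch of unsmoothed sentence-level BLEU (clipped n-gram precision combined with a brevity penalty). It is not the article's script and it does not load any model. The function names `ngrams` and `sentence_bleu`, the whitespace tokenization, and the example captions are all assumptions made for illustration.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate, references, max_n=4):
    """Unsmoothed sentence-level BLEU with uniform n-gram weights.

    Assumption: lowercase whitespace tokenization, which is cruder than
    the tokenizers used by standard BLEU tooling.
    """
    cand = candidate.lower().split()
    refs = [r.lower().split() for r in references]
    if not cand:
        return 0.0

    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        # Clip each candidate n-gram count by its maximum count in any reference.
        max_ref_counts = Counter()
        for ref in refs:
            for gram, count in ngrams(ref, n).items():
                max_ref_counts[gram] = max(max_ref_counts[gram], count)
        clipped = sum(min(c, max_ref_counts[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        # Without smoothing, any zero precision makes the whole score zero.
        if total == 0 or clipped == 0:
            return 0.0
        log_precision_sum += math.log(clipped / total) / max_n

    # Brevity penalty uses the reference length closest to the candidate
    # length, preferring the shorter reference on ties.
    ref_len = min((abs(len(r) - len(cand)), len(r)) for r in refs)[1]
    bp = 1.0 if len(cand) > ref_len else math.exp(1 - ref_len / len(cand))
    return bp * math.exp(log_precision_sum)


# Hypothetical captions: in practice the candidate would be a VLM's output
# and the references would come from a captioning dataset.
model_caption = "a dog runs on the grass"
reference_captions = ["a dog runs on the green grass"]
print(f"BLEU: {sentence_bleu(model_caption, reference_captions):.4f}")
```

Because this sketch applies no smoothing, short captions with no matching 4-gram score exactly zero. Library implementations typically offer smoothing options and corpus-level aggregation, which are usually preferable for reporting results.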