Purpose-Built Inference Acceleration Takes AI Everywhere – Intel AI

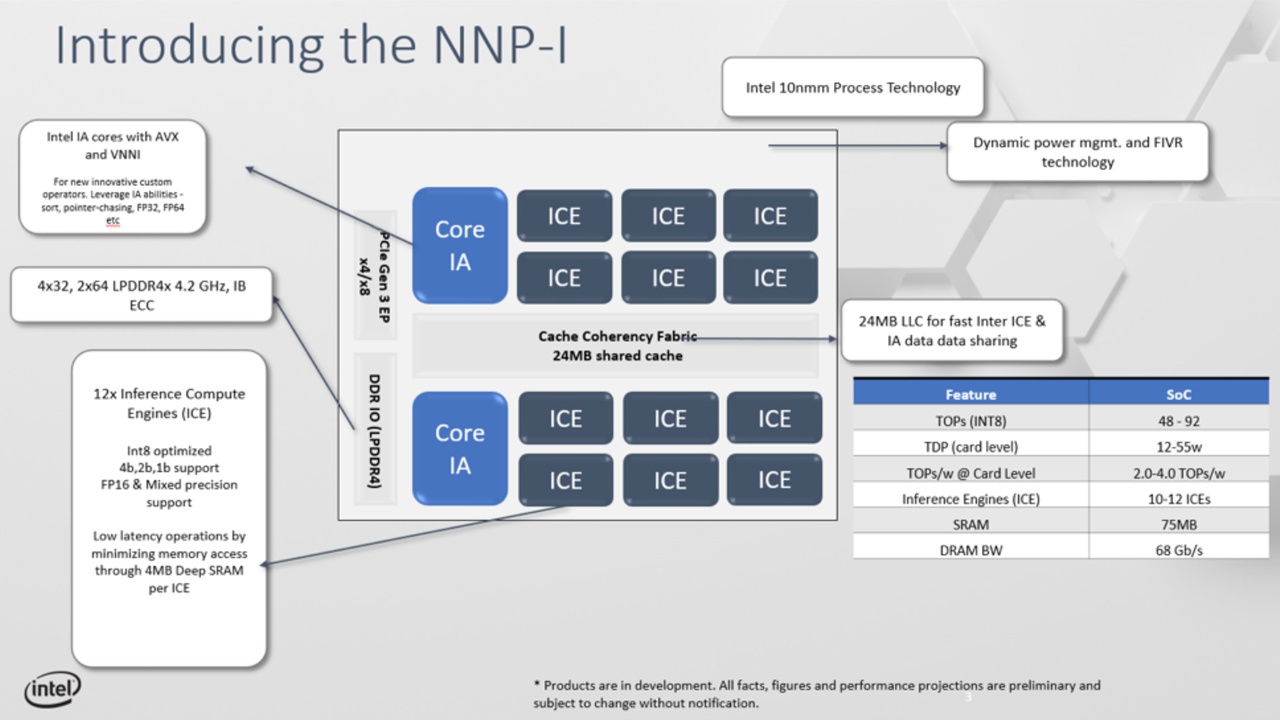

The Intel Nervana Neural Network Processor for Inference (Intel Nervana NNP-I) is architected for the most demanding inference workloads. Designed from the ground up, this dedicated accelerator offers a high degree of programmability without compromising performance-to-power efficiency, and it is built to satisfy the needs of enterprise-scale AI deployments. Digging into the architecture itself reveals why the Intel Nervana NNP-I is so efficient and why it is capable of tackling AI inference at scale.

January 14, 2020
